Dear friends, today we will look at the components of a Kubernetes cluster. We will also discuss the master and node components. So, let's start and go through these topics step by step.

Kubernetes Components

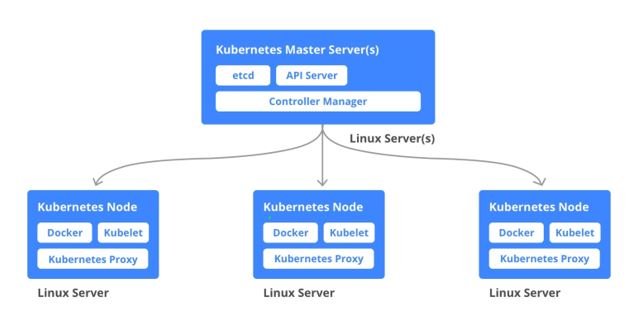

Kubernetes can largely be divided into Master and Node Components. There are also some add-ons such as the Web UI and DNS that are provided as a service by managed Kubernetes offerings (e.g. GKE, AKS, and EKS).

For more details about kubernetes components you can

Master Components

Master components globally monitor the cluster and respond to cluster events. These can include scheduling, scaling, or restarting an unhealthy pod. Five components make up the master components: Kube-API server, etcd, Kube-scheduler, Kube-controller-manager, and cloud-controller-manager.

Kube-API server

A REST API endpoint that serves as the frontend for the Kubernetes control plane. The Kubernetes API server validates and configures data for the API objects, which include pods, services, replication controllers, and others. The API server services REST operations and provides the frontend to the cluster's shared state, through which all other components interact.
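To make the "REST frontend" idea concrete, here is a minimal sketch of how resource URLs on the API server are laid out. The helper name `resource_path` and the example pod name are made up for illustration. The path layout (core resources under `/api/v1`, named API groups under `/apis/<group>/<version>`) follows the usual Kubernetes convention, but treat this as a sketch rather than a client library.

```python
def resource_path(resource, namespace=None, name=None, group="", version="v1"):
    """Build the REST path for a Kubernetes resource (illustrative helper)."""
    # The core group is served under /api, named groups under /apis.
    prefix = f"/api/{version}" if group == "" else f"/apis/{group}/{version}"
    parts = [prefix]
    if namespace is not None:
        # Namespaced resources are nested under their namespace.
        parts.append(f"namespaces/{namespace}")
    parts.append(resource)
    if name is not None:
        parts.append(name)
    return "/".join(parts)


# A single pod in the "default" namespace (core group).
print(resource_path("pods", namespace="default", name="web-0"))
# All deployments in the "default" namespace (the "apps" group).
print(resource_path("deployments", namespace="default", group="apps"))
```

Every other component (scheduler, controllers, kubelet) reads and writes cluster state through paths like these instead of talking to the data store directly.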

Etcd

A key-value store for the cluster data (regarded as the single source of truth). Etcd is a distributed, consistent key-value store used for configuration management, service discovery, and coordinating distributed work. In the context of Kubernetes, etcd reliably stores the configuration data of the Kubernetes cluster, representing the state of the cluster (what nodes exist in the cluster, what pods should be running, which nodes they are running on, and a lot more) at any given point in time. As all cluster data is stored in etcd, you should always have a backup plan for it. You can easily back up your etcd data using the `etcdctl snapshot save` command. If you are running Kubernetes on AWS, you can also back up etcd by taking a snapshot of the EBS volume.
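The following toy store is only a sketch of the idea of a key-value store that holds cluster state and can be snapshotted and restored. It is an in-memory stand-in, not etcd and not the etcd client API. The class name `ToyKeyValueStore` and the `/registry/...` keys are assumptions chosen for illustration.

```python
import copy


class ToyKeyValueStore:
    """In-memory stand-in for a key-value store holding cluster state."""

    def __init__(self):
        self._data = {}
        self.revision = 0  # incremented on every write

    def put(self, key, value):
        self.revision += 1
        self._data[key] = value
        return self.revision

    def get_prefix(self, prefix):
        # Return every key/value pair whose key starts with the prefix.
        return {k: v for k, v in sorted(self._data.items()) if k.startswith(prefix)}

    def snapshot(self):
        # A backup is a point-in-time copy of all data plus its revision.
        return {"revision": self.revision, "data": copy.deepcopy(self._data)}

    @classmethod
    def restore(cls, snap):
        store = cls()
        store.revision = snap["revision"]
        store._data = copy.deepcopy(snap["data"])
        return store


store = ToyKeyValueStore()
store.put("/registry/nodes/node-a", {"ready": True})
store.put("/registry/pods/default/web-0", {"node": "node-a"})
backup = store.snapshot()

# A later write does not affect the snapshot taken earlier.
store.put("/registry/pods/default/web-1", {"node": "node-a"})
restored = ToyKeyValueStore.restore(backup)
print(sorted(restored.get_prefix("/registry/pods/")))
```

The point of the sketch is the backup property: a snapshot captures the whole cluster state at one revision, which is why losing etcd without a backup means losing the cluster's configuration.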

Kube-scheduler

It watches for new workloads/pods and assigns them to a node based on several scheduling factors (resource constraints, anti-affinity rules, data locality, and so on). The Kubernetes scheduler is a policy-rich, topology-aware, workload-specific function that significantly impacts availability, performance, and capacity. The scheduler needs to take into account individual and collective resource requirements, quality of service requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, deadlines, and so on. Workload-specific requirements will be exposed through the API as necessary.
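As a rough illustration of the scheduling decision described above, the sketch below first filters out nodes that cannot host the pod (not enough resources, or an anti-affinity conflict) and then picks the feasible node with the most CPU left over. The dictionary fields (`free_cpu`, `free_mem`, `pod_labels`, `avoid_labels`) and the scoring rule are assumptions for this example; the real scheduler uses many more factors.

```python
def schedule(pod, nodes):
    """Return the name of the chosen node, or None if no node fits."""
    avoid = pod.get("avoid_labels", set())

    # Filtering step: keep only nodes that satisfy the hard constraints.
    feasible = [
        n for n in nodes
        if n["free_cpu"] >= pod["cpu"]
        and n["free_mem"] >= pod["mem"]
        and not (avoid & n["pod_labels"])  # simple anti-affinity check
    ]
    if not feasible:
        return None

    # Scoring step: prefer the node with the most CPU remaining afterwards.
    best = max(feasible, key=lambda n: n["free_cpu"] - pod["cpu"])
    return best["name"]


nodes = [
    {"name": "node-a", "free_cpu": 2.0, "free_mem": 4096, "pod_labels": {"app=db"}},
    {"name": "node-b", "free_cpu": 1.0, "free_mem": 2048, "pod_labels": set()},
    {"name": "node-c", "free_cpu": 4.0, "free_mem": 8192, "pod_labels": {"app=web"}},
]
pod = {"cpu": 1.0, "mem": 1024, "avoid_labels": {"app=web"}}
print(schedule(pod, nodes))  # node-a
```

Here `node-c` has the most free capacity but is excluded by the anti-affinity rule, so the pod lands on `node-a`.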

Kube-controller-manager

A central controller that watches nodes, replication sets, endpoints (services), and service accounts. The Kubernetes controller manager is a daemon that embeds the core control loops shipped with Kubernetes. In applications of robotics and automation, a control loop is a non-terminating loop that regulates the state of the system. In Kubernetes, a controller is a control loop that watches the shared state of the cluster through the API server and makes changes attempting to move the current state towards the desired state. Examples of controllers that ship with Kubernetes today are the replication controller, endpoints controller, namespace controller, and service accounts controller.
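The control loop idea can be sketched in a few lines: compare the desired state with the current state, then take actions that close the gap. This is a simplified model of a replication-style controller, not the actual Kubernetes implementation. The function names and the `pod-N` naming scheme are invented for the example, and the loop is bounded by `max_passes` so that the sketch terminates.

```python
import itertools


def reconcile(desired_replicas, running_pods):
    """Return the actions needed to move the current state to the desired state."""
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        return [("create", None)] * diff
    if diff < 0:
        surplus = -diff
        return [("delete", name) for name in sorted(running_pods)[:surplus]]
    return []


def run_control_loop(desired_replicas, running_pods, max_passes=5):
    """Apply reconcile() repeatedly until nothing is left to do."""
    pods = set(running_pods)
    names = (f"pod-{i}" for i in itertools.count())
    for _ in range(max_passes):
        actions = reconcile(desired_replicas, pods)
        if not actions:
            break  # current state already matches desired state
        for verb, name in actions:
            if verb == "create":
                # Pick the next generated name that is not already in use.
                pods.add(next(n for n in names if n not in pods))
            else:
                pods.discard(name)
    return pods


print(sorted(run_control_loop(3, {"pod-0"})))
print(sorted(run_control_loop(1, {"a", "b", "c"})))
```

A real controller never exits. It keeps watching the API server and reacts whenever the observed state drifts from the desired state again.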

Cloud-controller-manager

Interacts with the underlying cloud provider. Since cloud providers develop and release at a different pace compared to the Kubernetes project, abstracting the provider-specific code to the `cloud-controller-manager` binary allows cloud vendors to evolve independently from the core Kubernetes code. The cloud-controller-manager can be linked to any cloud provider that satisfies `cloudprovider.Interface`. For backwards compatibility, the cloud-controller-manager provided in the core Kubernetes project uses the same cloud libraries as Kube-controller-manager. Cloud providers already supported in Kubernetes core are expected to use the in-tree cloud-controller-manager to transition out of Kubernetes core.

Node Components

Unlike master components, which usually run on a single node (unless a High Availability setup is explicitly configured), node components run on every node.

Kubelet

An agent running on the node that inspects container health and reports to the master, as well as listening for new commands from the Kube-API server. The kubelet is the primary "node agent" that runs on each node. It can register the node with the API server using one of: the hostname, a flag that overrides the hostname, or specific logic for a cloud provider. The kubelet works in terms of a PodSpec. A PodSpec is a YAML or JSON object that describes a pod. The kubelet takes a set of PodSpecs that are provided through various mechanisms (primarily through the API server) and ensures that the containers described in those PodSpecs are running and healthy. The kubelet doesn't manage containers that were not created by Kubernetes.
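Here is a small sketch of the kubelet's job as described above: given a PodSpec and the observed state of containers on the node, work out what must be started or restarted. The PodSpec is reduced to a plain dictionary, and the state strings (`"healthy"`, `"unhealthy"`) and the function name `sync_pod` are assumptions for illustration. The real kubelet also handles probes, restart policies, volumes, and much more.

```python
def sync_pod(pod_spec, container_states):
    """Return the actions needed so every container in the PodSpec is running and healthy.

    container_states maps container name -> "healthy" or "unhealthy";
    a missing entry means the container is not running at all.
    """
    actions = []
    for container in pod_spec["containers"]:
        state = container_states.get(container["name"])
        if state is None:
            actions.append(("start", container["name"], container["image"]))
        elif state != "healthy":
            actions.append(("restart", container["name"], container["image"]))
    # Containers that are not listed in the PodSpec are never touched here.
    return actions


pod_spec = {
    "containers": [
        {"name": "web", "image": "nginx:1.25"},
        {"name": "sidecar", "image": "busybox:1.36"},
    ]
}
observed = {"web": "unhealthy", "manually-started": "healthy"}
for action in sync_pod(pod_spec, observed):
    print(action)
```

Note that the `manually-started` container is ignored, which mirrors the point that the kubelet does not manage containers that Kubernetes did not create.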

Kube-Proxy

Maintains the network rules. The Kubernetes network proxy runs on each node. It reflects services as defined in the Kubernetes API on each node and can do simple TCP, UDP, and SCTP stream forwarding or round robin TCP, UDP, and SCTP forwarding across a set of backends. Service cluster IPs and ports are currently found through Docker-links-compatible environment variables specifying ports opened by the service proxy. There is an optional add-on that provides cluster DNS for these cluster IPs. The user must create a service with the API server API to configure the proxy.
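Round robin forwarding across a set of backends can be sketched as follows. This only models the backend selection, not real packet forwarding, and the class name, IP addresses, and ports are made up for the example.

```python
import itertools


class RoundRobinProxy:
    """Maps a service (cluster IP, port) to its backends and rotates through them."""

    def __init__(self):
        self._services = {}

    def set_service(self, cluster_ip, port, backends):
        backends = list(backends)
        if not backends:
            raise ValueError("a service needs at least one backend")
        # itertools.cycle yields the backends in order, forever.
        self._services[(cluster_ip, port)] = itertools.cycle(backends)

    def pick_backend(self, cluster_ip, port):
        return next(self._services[(cluster_ip, port)])


proxy = RoundRobinProxy()
proxy.set_service("10.96.0.10", 80, ["10.244.1.5:8080", "10.244.2.7:8080"])
for _ in range(3):
    print(proxy.pick_backend("10.96.0.10", 80))
```

Each new connection to the service's cluster IP goes to the next backend in the list, which spreads traffic evenly across the pods behind the service.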

Container runtime

Software for running the containers (e.g. Docker, rkt, runc). To run containers in Pods, Kubernetes uses a container runtime. A flaw was found in the way runc handled system file descriptors when running containers (tracked as CVE-2019-5736). A malicious container could use this flaw to overwrite the contents of the runc binary and consequently run arbitrary commands on the container host system.

Cgroup drivers

When systemd is chosen as the init system for a Linux distribution, the init process generates and consumes a root control group (cgroup) and acts as a cgroup manager. Systemd has a tight integration with cgroups and allocates a cgroup per systemd unit. It's possible to configure your container runtime and the kubelet to use cgroupfs. Using cgroupfs alongside systemd means that there will be two different cgroup managers.
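The "two cgroup managers" problem can be expressed as a simple consistency check. The function below is a hypothetical helper, not part of any Kubernetes tool. It takes the init system and the cgroup driver names configured for the kubelet and the container runtime, and reports the situations described above.

```python
def check_cgroup_drivers(init_system, kubelet_driver, runtime_driver):
    """Return a list of problems with the given cgroup driver setup (empty if none)."""
    problems = []
    if kubelet_driver != runtime_driver:
        problems.append("kubelet and container runtime use different cgroup drivers")
    if init_system == "systemd" and "cgroupfs" in (kubelet_driver, runtime_driver):
        problems.append("cgroupfs alongside systemd means two cgroup managers on the node")
    return problems


print(check_cgroup_drivers("systemd", "systemd", "systemd"))
print(check_cgroup_drivers("systemd", "cgroupfs", "cgroupfs"))
```

On a systemd-based distribution, the consistent choice is to use the systemd driver for both the kubelet and the container runtime, so that only one cgroup manager is in charge.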

That's all for this session. We have seen the Kubernetes components.

If you want to install a Kubernetes cluster on a CentOS 7 VM, you can click on the link below.
